Mis-sized requests and limits are a common source of overspending or instability: padded requests reserve capacity that sits idle, a container may assume more CPU than the node can fairly provide, and memory limits set too low invite OOM kills.

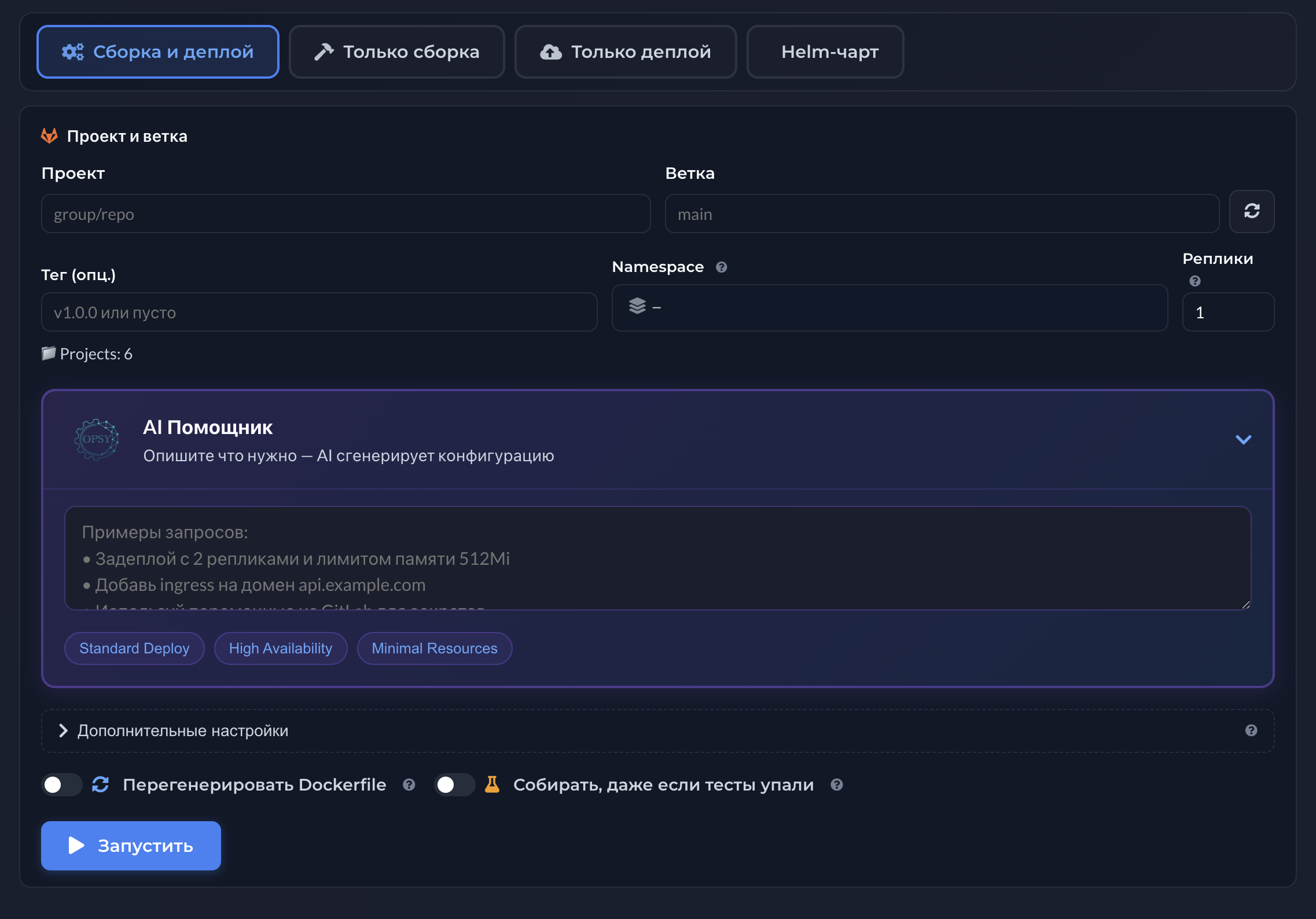

AI suggestions in Opsy are a starting point for engineering judgment, not an autopilot. Teams cross-check them against load profiles, SLOs, and budget, then apply changes in a controlled way.

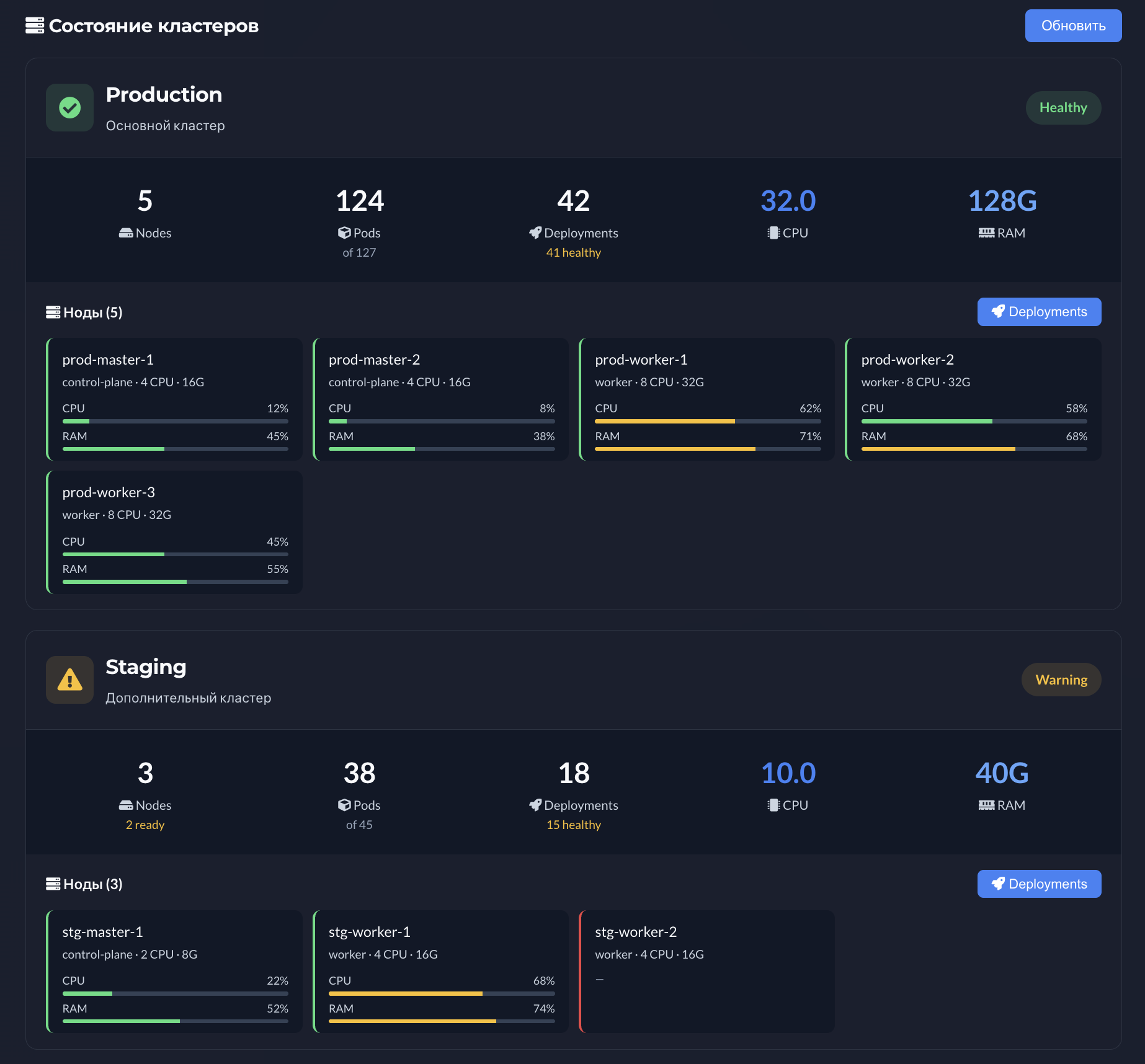

FinOps and engineering often speak different languages: invoices versus latency. Shared utilization views (see monitoring & metrics) and suggested bands help both sides align without blame.

Requests and limits optimization loop in Kubernetes

Gather metrics → spot workloads with obvious headroom or risk → form a hypothesis → validate with load or canary tests (see AI deploy) → lock in the new requests and limits. Opsy accelerates the steps up to the hypothesis.
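The "spot workloads with obvious headroom or risk" step can be illustrated with a minimal Python sketch. It is not how Opsy computes its suggestions: the `Workload` type, the 95th-percentile choice, and the `low`/`high` thresholds are all assumptions made for illustration, and real inputs would come from your metrics backend.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Workload:
    name: str
    cpu_request_m: int        # requested CPU, in millicores
    cpu_usage_m: list         # observed CPU usage samples, in millicores


def p95(samples):
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return quantiles(samples, n=20)[-1]


def classify(workload, low=0.5, high=0.9):
    """Label a workload by how its p95 usage compares to its request.

    The thresholds are illustrative assumptions, not recommended values.
    """
    ratio = p95(workload.cpu_usage_m) / workload.cpu_request_m
    if ratio < low:
        return "headroom"   # request looks padded; candidate for a lower request
    if ratio > high:
        return "risk"       # usage is close to or above the request
    return "ok"


if __name__ == "__main__":
    fleet = [
        Workload("checkout", 1000, [100] * 20),
        Workload("search", 1000, [950] * 20),
    ]
    for w in fleet:
        print(w.name, classify(w))
```

A label such as "headroom" is only the hypothesis; the loop still calls for validating it with load or canary tests before anything is changed.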

Right-sizing risks: throttling and OOM-kill

Aggressive CPU cuts can cause throttling and hurt latency; memory limits set too low can get Pods OOM-killed during spikes. Observe the workload after every change and keep a fast rollback path.
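As a rough illustration of post-change observation, the Python sketch below turns two signals into a rollback decision. The function names and thresholds are hypothetical. The inputs are assumed to be counters you already collect, for example the cAdvisor metrics `container_cpu_cfs_throttled_periods_total` and `container_cpu_cfs_periods_total`, plus peak memory usage measured against the configured limit.

```python
def throttle_ratio(throttled_periods, total_periods):
    """Fraction of CPU scheduling periods in which the container was throttled."""
    if total_periods == 0:
        return 0.0
    return throttled_periods / total_periods


def rollback_reasons(throttled_periods, total_periods,
                     peak_memory_bytes, memory_limit_bytes,
                     max_throttle=0.25, max_memory_fraction=0.9):
    """Return a list of reasons to roll back; an empty list means none found.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    reasons = []
    ratio = throttle_ratio(throttled_periods, total_periods)
    if ratio > max_throttle:
        reasons.append(f"CPU throttled in {ratio:.0%} of periods")
    memory_fraction = peak_memory_bytes / memory_limit_bytes
    if memory_fraction > max_memory_fraction:
        reasons.append(f"peak memory at {memory_fraction:.0%} of limit")
    return reasons


if __name__ == "__main__":
    mib = 2 ** 20
    print(rollback_reasons(300, 1000, 450 * mib, 512 * mib))
```

Returning reasons instead of a bare boolean keeps the decision reviewable, which suits a controlled change process.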

Highlights

- CPU and memory hints grounded in observed usage

- Less padding by guesswork and less cluster waste

- Lower OOM risk from underestimated limits

- Shared vocabulary for engineering and FinOps

- Fits infrastructure change review practices

- Pairs with monitoring and release history

- Especially helpful as similar services multiply