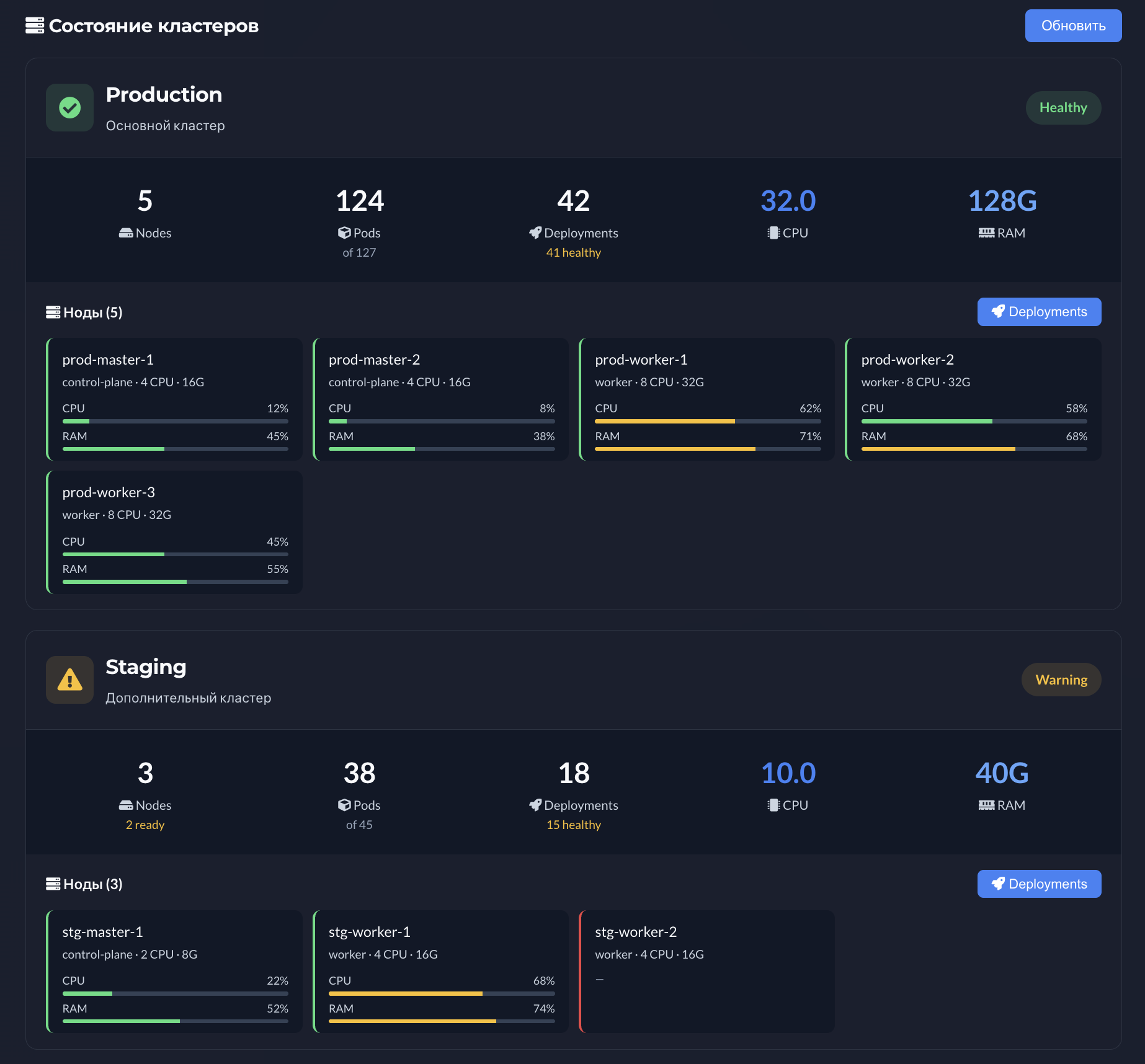

Metrics do not fix infrastructure by themselves, but without them tuning is guesswork. Cluster-level visibility helps separate “app bottleneck” from “node starvation.”

CPU and memory signals for nodes and workloads make the case for hardware purchases or autoscaling. They also give FinOps a working vocabulary: fewer vague “we should optimize” statements, more grounded views of consumption.

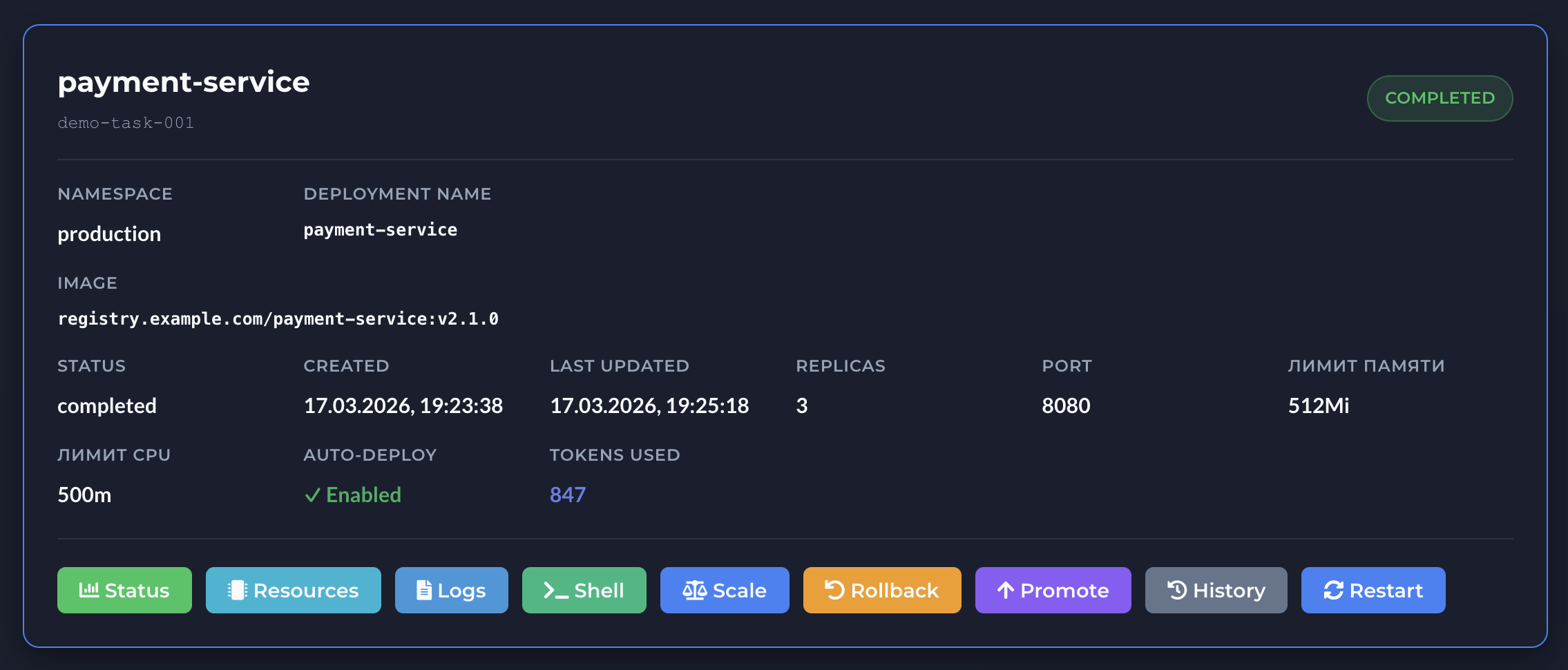

Pairing signals with requests/limits guidance makes the next step concrete: not “tweak something,” but “start in this band and validate with load tests.”
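One way to picture a "band" is as a range derived from observed usage. The sketch below is only an illustration, not how Opsy computes its guidance: the function names, the choice of p50 and p95, the 20% headroom, and the sample values are all assumptions made for this example.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def suggest_request_band(cpu_millicores, headroom_pct=20):
    """Return a (low, high) CPU request band in millicores.

    Assumption for illustration: low is the median of observed usage,
    high is the 95th percentile plus some headroom.
    """
    low = percentile(cpu_millicores, 50)
    high = math.ceil(percentile(cpu_millicores, 95) * (100 + headroom_pct) / 100)
    return low, high


# Made-up usage samples for one container, in millicores.
samples = [120, 135, 150, 160, 180, 210, 240, 260, 300, 420]
band = suggest_request_band(samples)
print(f"start CPU requests between {band[0]}m and {band[1]}m, then load test")
```

A band like this is a starting point for a load test, not a final value; the percentiles and headroom would need tuning per workload.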

From Kubernetes metrics to concrete actions

A healthy loop: notice an anomaly → narrow it to a service or node → check events and logs → decide whether to scale or change configuration. Opsy is not a full APM replacement, but it shortens the path to first answers.
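The "narrow to service or node" step can be sketched as a simple rule over a utilization snapshot. This is a hypothetical illustration: the `Snapshot` shape, the `narrow_scope` function, the thresholds, and the node and pod names are assumptions, not part of Opsy or Kubernetes.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Snapshot:
    # Node name -> CPU utilization as a percent of allocatable CPU.
    node_cpu_pct: Dict[str, float]
    # Pod name -> CPU usage as a percent of the pod's CPU limit.
    pod_cpu_pct_of_limit: Dict[str, float]


def narrow_scope(
    snap: Snapshot,
    node_threshold: float = 85.0,  # assumed cutoff for "node starvation"
    pod_threshold: float = 90.0,   # assumed cutoff for "app bottleneck"
) -> Tuple[str, List[str]]:
    """Return ('node' | 'service' | 'inconclusive', names to look at first)."""
    hot_nodes = sorted(n for n, v in snap.node_cpu_pct.items() if v >= node_threshold)
    if hot_nodes:
        return "node", hot_nodes
    hot_pods = sorted(p for p, v in snap.pod_cpu_pct_of_limit.items() if v >= pod_threshold)
    if hot_pods:
        return "service", hot_pods
    return "inconclusive", []


# Made-up snapshot: one saturated node, one pod close to its limit.
snap = Snapshot(
    node_cpu_pct={"node-a": 62.0, "node-b": 91.5},
    pod_cpu_pct_of_limit={"checkout-7f9": 97.0},
)
scope, names = narrow_scope(snap)
print(scope, names)
```

Checking nodes first is a design choice in this sketch: a starved node can make every pod on it look unhealthy, so it is worth ruling out before blaming a single service. Events and logs still decide the actual fix.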

Incidents, capacity planning and audit

In postmortems, metrics help answer whether degradation started before or after a release. In planning, they justify whether the current node pool can handle seasonal peaks.
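The before-or-after-release question comes down to comparing two timestamps. A minimal sketch, assuming a latency series and a fixed threshold; the function names, the threshold, and the data points are invented for illustration and do not come from any real incident or from Opsy.

```python
from datetime import datetime


def degradation_onset(points, threshold):
    """Return the timestamp of the first sample above threshold, or None."""
    for ts, value in sorted(points):
        if value > threshold:
            return ts
    return None


def relative_to_release(points, threshold, release_at):
    """Classify the onset of degradation relative to a release time."""
    onset = degradation_onset(points, threshold)
    if onset is None:
        return "no degradation"
    return "before release" if onset < release_at else "after release"


# Made-up p95 latency samples in milliseconds.
points = [
    (datetime(2024, 3, 1, 10, 0), 180.0),
    (datetime(2024, 3, 1, 10, 10), 190.0),
    (datetime(2024, 3, 1, 10, 20), 420.0),
    (datetime(2024, 3, 1, 10, 30), 450.0),
]
release_at = datetime(2024, 3, 1, 10, 15)
verdict = relative_to_release(points, threshold=300.0, release_at=release_at)
print(verdict)
```

A single threshold crossing is a coarse signal. In a real postmortem the same comparison would usually be repeated across several metrics before drawing a conclusion about the release.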

Highlights

- CPU and memory utilization across cluster and workloads

- Context for requests/limits and scaling choices

- Fewer unexplained throttling spikes and surprise OOMs

- Shared language for engineering and FinOps

- Connects to AI-assisted resource recommendations

- Faster hypotheses during performance incidents

- Fits teams without a dedicated 24/7 SRE bench