Kubernetes infrastructure optimization

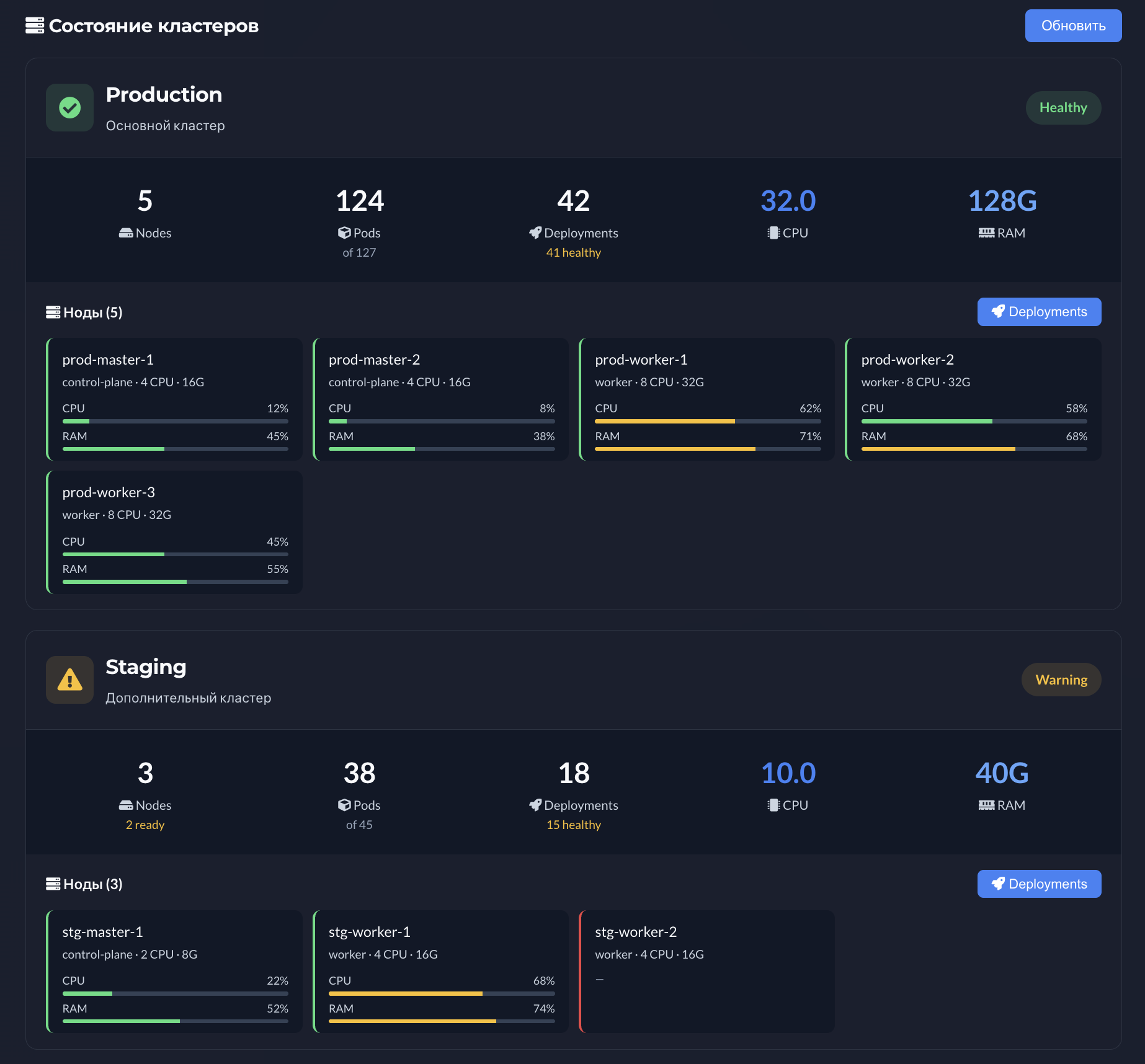

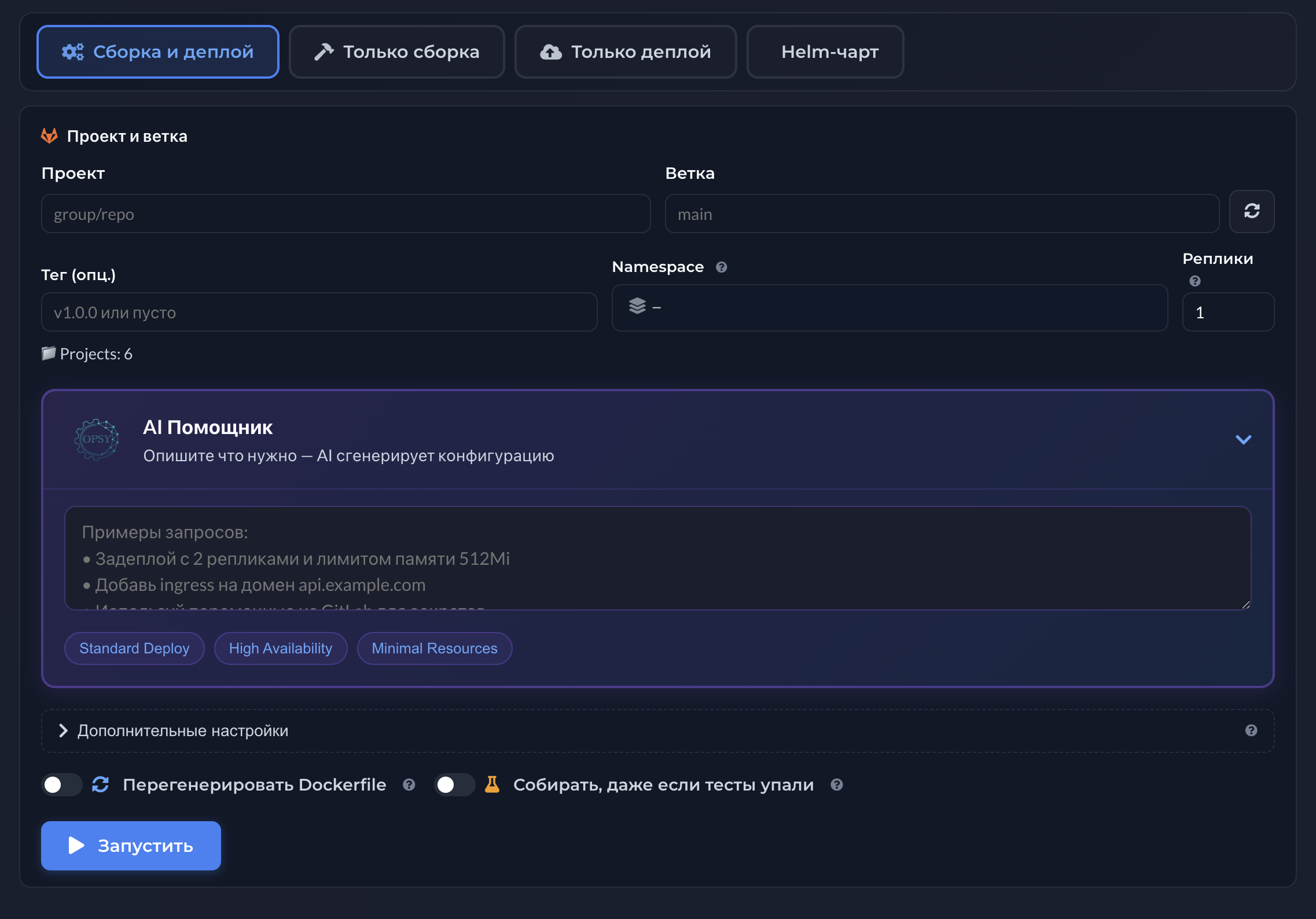

Opsy surfaces workload and utilization context to justify CPU/RAM changes.

Cluster cost grows with service count: requests and limits are often set generously, while real usage is rarely revisited.

FinOps and engineering speak past each other without shared workload and node facts.

Aggressive cuts without utilization data raise the risk of throttling and OOMs during peaks.

Raw metrics without mapping to services and owners do not tell you whom to optimize first.
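As a rough illustration of why the service and owner mapping matters, the sketch below ranks workloads by requested-but-unused CPU. It is not Opsy's method: the `Workload` fields, the use of p95 usage as the baseline, and the sample numbers are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Hypothetical record: one row per service, already joined with its owner.
    service: str
    owner: str
    cpu_request_cores: float
    cpu_p95_usage_cores: float  # assumed baseline; any peak-aware statistic works


def rank_by_cpu_waste(workloads):
    """Return (service, owner, wasted_cores) tuples, largest waste first."""
    rows = [
        # Waste is clamped at zero: a workload using more than it requests
        # is under-provisioned, not wasteful.
        (w.service, w.owner, max(w.cpu_request_cores - w.cpu_p95_usage_cores, 0.0))
        for w in workloads
    ]
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Made-up sample data for illustration only.
sample = [
    Workload("checkout", "team-payments", 4.0, 1.0),
    Workload("search", "team-discovery", 2.0, 1.5),
    Workload("auth", "team-platform", 1.0, 1.25),
]
ranking = rank_by_cpu_waste(sample)
for service, owner, wasted in ranking:
    print(f"{service:10s} {owner:16s} {wasted:.2f} cores unused")
```

With the owner attached to each row, the top of the list names both the service to look at first and the team to talk to.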

Surfaces node and workload context so requests and limits discussions rely on evidence, not gut feel.

Makes conversations between service owners and the platform team clearer: where waste lives and where bottlenecks are.

Methodology and UI depth are on the CPU / RAM optimization topic page.

Requests and limits affect scheduling, autoscaling, and node cost. Random cuts without utilization context create latency pain or evictions.

FinOps wants cost numbers while engineers care about QoS; without shared facts, talks stall.

Tuning one service without neighbor context on the node can fake progress while the node stays hot.
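The point about neighbor context can be shown with a small sketch. It assumes a single node with 8 allocatable cores and made-up per-pod figures; the `node_summary` helper and the dictionary layout are hypothetical and exist only for this example.

```python
def node_summary(pods, allocatable_cores):
    """Compare what is reserved on a node with what is actually consumed."""
    requested = sum(p["request"] for p in pods)
    used = sum(p["usage"] for p in pods)
    return {
        "requested_ratio": requested / allocatable_cores,
        "used_ratio": used / allocatable_cores,
    }


# Made-up pods sharing one node (CPU in cores).
pods = [
    {"service": "checkout", "request": 4.0, "usage": 1.0},
    {"service": "search", "request": 2.0, "usage": 3.0},
    {"service": "batch-export", "request": 1.0, "usage": 3.0},
]

before = node_summary(pods, allocatable_cores=8.0)

# "Optimize" only checkout by lowering its request to match its usage.
pods[0]["request"] = 1.0
after = node_summary(pods, allocatable_cores=8.0)

print("before:", before)
print("after: ", after)
```

In this example the requested ratio drops after the change, which looks like progress, but the used ratio is unchanged because the neighbors that consume more than they request were never touched.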

The screenshots come from the related Opsy product topic.