Bulk deploy to Kubernetes

With many services, one-by-one deploys become a bottleneck. Opsy provides a single flow for all of them.

Platform and product teams juggle dozens or hundreds of workloads; shipping “one service per day” no longer matches the pace of the business.

Parallel deploys without shared rules create cluster collisions and noisy cross-team coordination.

Plan and execute batch updates with clear ordering and an audit trail of who shipped what.
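As an illustration of "clear ordering and an audit trail", here is a minimal Python sketch. It is not Opsy's implementation or API: the names `plan_waves`, `run_batch`, and `AuditEntry`, and the `deploy` callback, are hypothetical. It assumes each service declares the services it depends on, groups them into waves with the standard-library `graphlib` module (Python 3.9+), and records who shipped what.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from graphlib import TopologicalSorter


@dataclass
class AuditEntry:
    # Hypothetical audit record: who shipped which version, in which wave.
    service: str
    version: str
    actor: str
    wave: int
    at: str


def plan_waves(depends_on):
    """Group services into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable, reviewable plan
        waves.append(ready)
        sorter.done(*ready)
    return waves


def run_batch(depends_on, versions, actor, deploy):
    """Deploy wave by wave and return the audit trail.

    `deploy` is a placeholder callback standing in for the real rollout step.
    """
    audit = []
    for wave_no, wave in enumerate(plan_waves(depends_on), start=1):
        for service in wave:
            deploy(service, versions[service])
            audit.append(
                AuditEntry(
                    service=service,
                    version=versions[service],
                    actor=actor,
                    wave=wave_no,
                    at=datetime.now(timezone.utc).isoformat(),
                )
            )
    return audit
```

Services in the same wave have no dependencies on each other, so a real runner could roll them out in parallel; the sketch keeps them sequential for readability.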

A shared language for dev and ops: the same scenario applies to critical and secondary services, with different policies but without per-repo magic.

Opsy turns many rollouts into a governed flow, with fewer repeated manual steps and fewer forgotten dependents.

History and rollback live at the operation layer, not in scattered scripts.

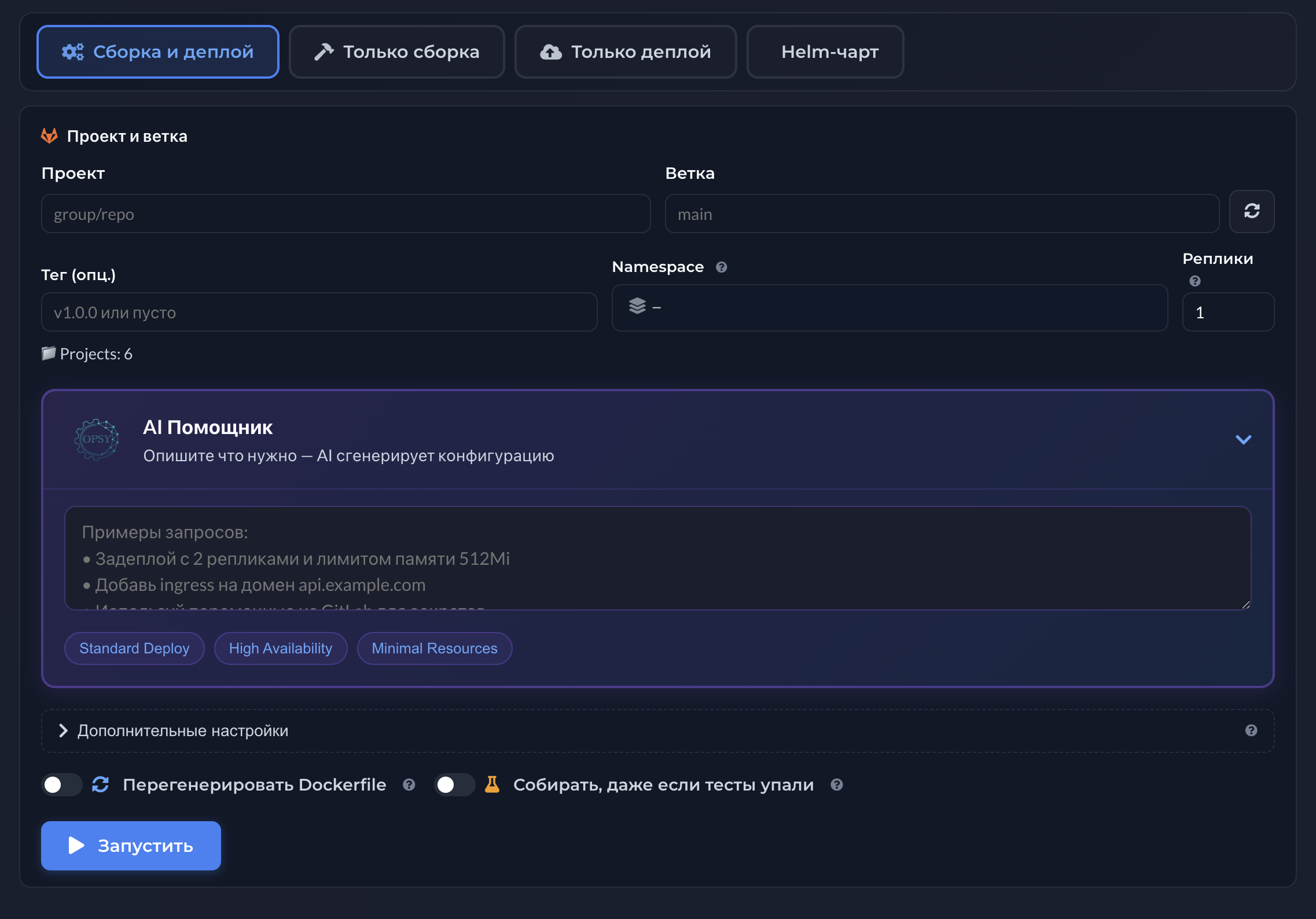

Deploy and AI-assisted capabilities are covered on the AI deploy topic page.

Release trains, cross-team dependencies, and coordinated changes break down if every deploy is its own manual ritual.

Parallel deploys without shared rules cause two services to fight for the same resources or break shared ingress/secrets.
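One way to catch such collisions before a batch starts is to compare what each service touches. The Python sketch below is an assumption-laden illustration, not an Opsy feature: the function name `find_collisions` and the `kind/name` resource labels are hypothetical, and it assumes each service can list the shared resources (ingress, secrets) its rollout modifies.

```python
from collections import defaultdict


def find_collisions(touches):
    """Return shared resources modified by more than one service in a batch.

    `touches` maps a service name to the set of resource labels it modifies.
    The labels (for example "ingress/shop") are a hypothetical convention.
    """
    owners = defaultdict(list)
    for service, resources in touches.items():
        for resource in resources:
            owners[resource].append(service)
    # Keep only resources claimed by two or more services.
    return {res: sorted(svcs) for res, svcs in owners.items() if len(svcs) > 1}
```

A batch planner could refuse to run colliding services in the same wave, or serialize them, depending on the team's policy.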

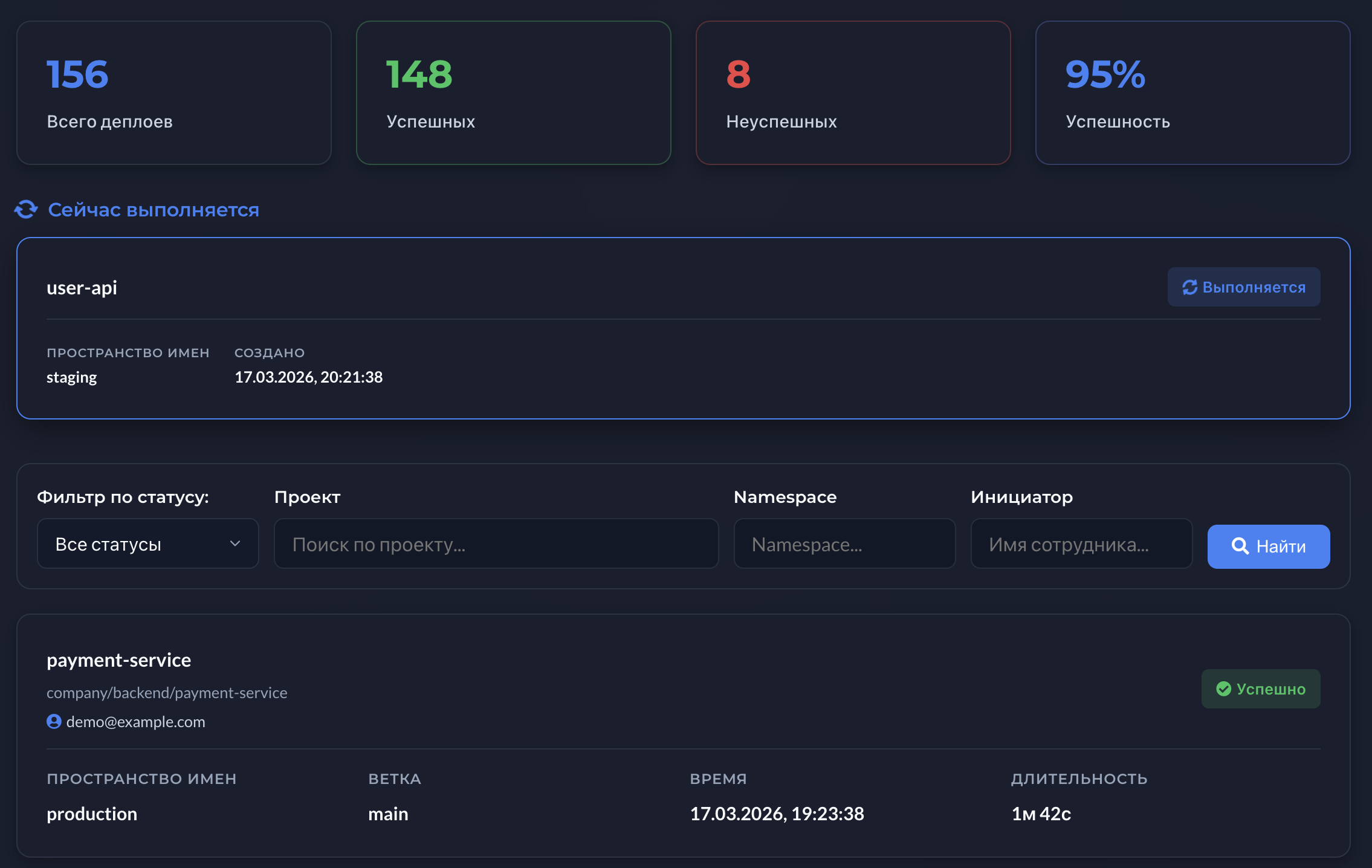

Platform teams need a view of what is rolling out across dozens of namespaces; otherwise, on-call becomes guesswork.

Documenting bulk changes for postmortems is impossible if history only lives in personal terminals.

Start by defining who may initiate bulk deploys, which checks are mandatory beforehand, and how rollbacks are recorded.
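These three decisions can be written down as data rather than tribal knowledge. The following Python sketch shows one possible shape, under stated assumptions: `BulkPolicy`, `authorize`, and `record_rollback` are hypothetical names, not part of Opsy, and the check names are placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class BulkPolicy:
    # Hypothetical policy: who may start a bulk deploy and what must pass first.
    allowed_initiators: frozenset
    mandatory_checks: frozenset


def authorize(policy, actor, passed_checks):
    """Return the reasons a bulk deploy is blocked; an empty list means allowed."""
    problems = []
    if actor not in policy.allowed_initiators:
        problems.append(f"{actor} may not initiate bulk deploys")
    for check in sorted(policy.mandatory_checks - set(passed_checks)):
        problems.append(f"missing mandatory check: {check}")
    return problems


def record_rollback(log, service, from_version, to_version, actor, reason):
    """Append a rollback record to a shared log instead of a personal terminal."""
    entry = {
        "service": service,
        "from": from_version,
        "to": to_version,
        "actor": actor,
        "reason": reason,
        "at": datetime.now(timezone.utc).isoformat(),
    }
    log.append(entry)
    return entry
```

Returning a list of reasons, rather than a bare boolean, lets the caller show the initiator exactly what is missing.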

Standardize service and environment descriptions; without that, bulk operations stay brittle.

After the process stabilizes, add accelerators like AI-assisted configuration without breaking controls.

Screenshots below come from the related Opsy product topic.